
Data lake architecture

1/1/2024

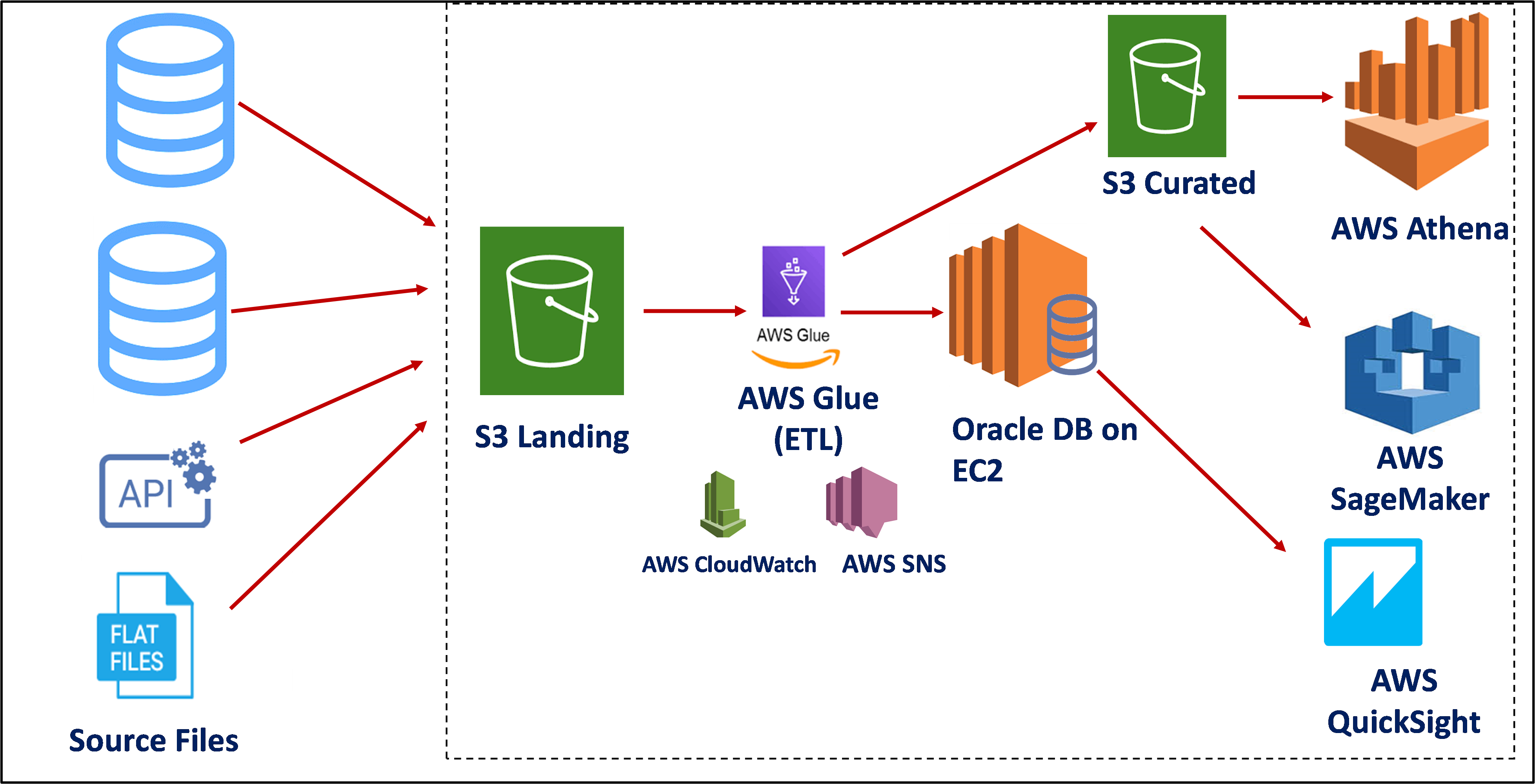

A data lake serves as a central repository used for storing several types of data, at scale. For example, you can store unstructured data, as well as structured data, in your data lake.

A data lake does not require any upfront work on the data. You can simply integrate and store data as it streams in from multiple sources. Depending on the capabilities of the system you are using, you might be able to set up real-time data ingestion.

Organizations typically use data lakes to store data for future or real-time analysis. This often requires the use of analytics tools and frameworks, like Google BigQuery, Amazon Athena, or Apache Spark.

Data Lake Architecture

A data lake can have various types of physical architectures because it can be implemented using many different technologies. However, there are three main principles that differentiate a data lake from other big data storage methods:

- All data is accepted into the data lake: it ingests and archives data from multiple sources, including structured, unstructured, raw, and processed data.
- Data is stored in its original form: after receiving the data from the source, the data is stored unconverted or with minimal treatment.
- Data is transformed on demand: the data is transformed and structured according to the analysis requirements and queries being performed.

Most of the data in a data lake is unstructured and not designed to answer specific questions, but it is stored in a way that facilitates dynamic querying and analysis.

Regardless of how you choose to implement a data lake, the following capabilities will help you keep it functional and make good use of its unstructured data:

- Data classification and data profiling: the data lake should make it possible to classify data by data types, content, usage scenarios, and possible user groups. It should be equipped with data profiling technology, to provide insights into data quality.
- Conventions: the data lake should, as much as possible, enforce agreed file types and naming conventions.
- Data access: there should be a standardized data access process, used both by human users and integrated systems, which enables tracking of access and use of the data.
- Data catalog: the data lake should provide a data catalog that enables the search and retrieval of data according to a data type or usage scenario.
- Data protection: security controls, data encryption, and automatic monitoring must be in place, and alerts should be raised when unauthorized parties access the data, or when authorized users perform suspicious activities.
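To make the "store data in its original form, transform it on demand" idea more concrete, here is a minimal in-memory sketch in Python. It is only an illustration under simplifying assumptions, not how any particular product works: `TinyLake`, `RawObject`, and `to_rows` are hypothetical names invented for this example, and a real data lake would sit on object storage with a proper query engine, encryption, and monitoring. The sketch also touches on two of the capabilities mentioned in this post: a catalog lookup by data type and a single access path that records who read what.

```python
import csv
import io
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class RawObject:
    """One ingested item, kept exactly as received, plus catalog metadata."""
    key: str
    payload: bytes          # original bytes, never converted on ingest
    data_type: str          # hypothetical labels: "json", "csv", "text"
    source: str
    ingested_at: datetime


@dataclass
class TinyLake:
    """Hypothetical toy stand-in for a data lake (illustration only)."""
    objects: dict = field(default_factory=dict)
    access_log: list = field(default_factory=list)

    def ingest(self, key, payload, data_type, source):
        # Accept any data and store it unconverted.
        self.objects[key] = RawObject(
            key, payload, data_type, source, datetime.now(timezone.utc)
        )

    def search(self, data_type):
        # Catalog-style lookup: find keys by data type.
        return sorted(k for k, o in self.objects.items() if o.data_type == data_type)

    def read(self, key, user):
        # One standardized access path, so every read is tracked.
        self.access_log.append((user, key))
        return self.objects[key]


def to_rows(obj):
    """Transform on demand: structure is applied only when data is read."""
    text = obj.payload.decode("utf-8")
    if obj.data_type == "json":
        # Assumes newline-delimited JSON records.
        return [json.loads(line) for line in text.splitlines() if line.strip()]
    if obj.data_type == "csv":
        return list(csv.DictReader(io.StringIO(text)))
    return [{"text": text}]


lake = TinyLake()
lake.ingest("events/2024-01-01.json",
            b'{"user": "a", "clicks": 3}\n{"user": "b", "clicks": 5}\n',
            "json", "web")
lake.ingest("sales/q1.csv", b"region,amount\nEU,10\nUS,20\n", "csv", "erp")
lake.ingest("notes/readme.txt", b"free-form notes", "text", "wiki")

# The analysis decides the shape of the data at query time.
rows = to_rows(lake.read("events/2024-01-01.json", user="analyst"))
total_clicks = sum(r["clicks"] for r in rows)
```

Note that `ingest` never parses the payload. Parsing happens only in `to_rows`, so the same raw bytes could later be read with a different structure if the analysis requirements change.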
